Illuminating AI Choices: Benchmarking GLM-4.5V and MiniMax M1

In today’s AI world, many models claim top performance, but finding the best one for your needs can be tricky. That’s where benchmarking helps: it clears up confusion with real comparisons. Think of it as a fair test for AI models, running them all on the same tasks to show which ones really stand out. This benchmark covers a wide range of features, like web development, coding tasks, text-to-image generation, web searches, task reminders, speed, and more. Now, let’s look at the new GLM-4.5V and MiniMax M1, and see how they stack up against leading models like ChatGPT 5, Gemini 2.5 Pro, Claude, Grok, and others.

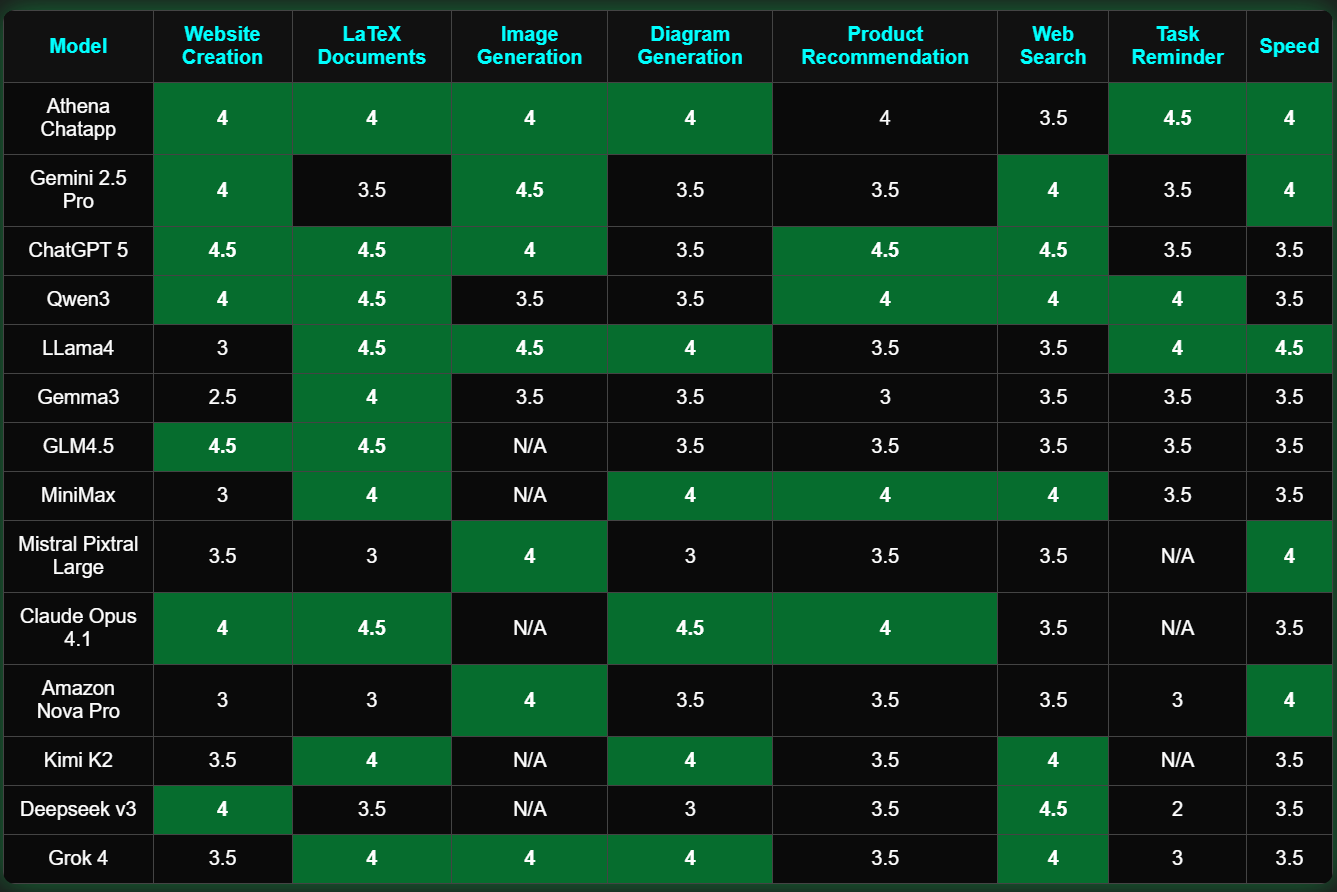

GLM-4.5, engineered with 355 billion total parameters and 32 billion active per forward pass, is a cornerstone model tailored for agentic operations. It distinguishes itself in coding, adeptly constructing projects from the ground up and resolving challenges within established frameworks. The frontend interfaces it produces are usable and visually appealing, in line with user preferences. In web development, GLM-4.5 shines with its web replication capability, reproducing websites from images with near-flawless accuracy, complemented by prompt-to-website generation that delivers both operational efficiency and aesthetic appeal. It also performs remarkably well in LaTeX document creation, producing materials that feature quizzes and intricate diagrams. That said, it lacks image generation and could improve in web search and product suggestions.

MiniMax M1, boasting 456 billion parameters and a remarkable 1 million token context window, redefines performance boundaries. Its open accessibility, extensive context handling, and computational thriftiness mitigate key hurdles for experts overseeing large-scale AI infrastructures. This model thrives in diagram creation, offering great visuals, and excels in product recommendations, web searches, and LaTeX document creation with notable finesse. These attributes make it a reliable choice for technical pursuits requiring depth and accuracy. However, there is room for improvement in web development, task reminders, and image generation, where it could further align with user expectations.

Across the benchmark, ChatGPT 5, Gemini 2.5 Pro, and GLM-4.5V demonstrate exceptional aptitude in web development, delivering functional and appealing outputs. Grok and Gemini stand out for task reminders, providing timely and effective prompts that enhance productivity. In image generation, LLaMA, ChatGPT 5, and Gemini 2.5 Pro lead with vivid and high-quality results. Claude, MiniMax, and Kimi K2 shine in diagram generation, creating clear and detailed representations. Meanwhile, Gemma exhibits potential for growth in web development, while DeepSeek and Mistral could bolster their diagram generation to better compete.

Different models shine in different domains, each contributing unique strengths to the AI ecosystem. Athena ChatApp integrates these highlights, excelling in intricate assignments like web development, LaTeX document generation, and image creation, while seamlessly managing simpler duties such as product recommendations, travel itineraries, web searches, and task reminders. Athena’s open accessibility, long-context prowess, and computational efficiency tackle persistent obstacles for professionals handling AI at scale. Discover how Athena elevates productivity, whether at home or in the workplace. With an unwavering commitment to evolution, Athena continually introduces fresh capabilities and enhancements to support your everyday endeavors, positioning it as the premier, dependable solution for efficient outcomes.
